Last updated: April 9, 2026

Our agency conducted a research study on the rise of autonomous AI agents – their use cases, usage statistics, strengths, and weaknesses. The original study began on January 14, 2025, and we have since updated it to include data through April 2, 2026.

The study surveyed 8,128 agentic AI users, who answered a series of questions over a rolling 3-month period. We segmented the responses into 7 statistical categories.

Below, you can find the results of our study, which comprise some of the early research on how autonomous AI agents are being used by businesses and consumers.

The Top Autonomous AI Agents of 2026

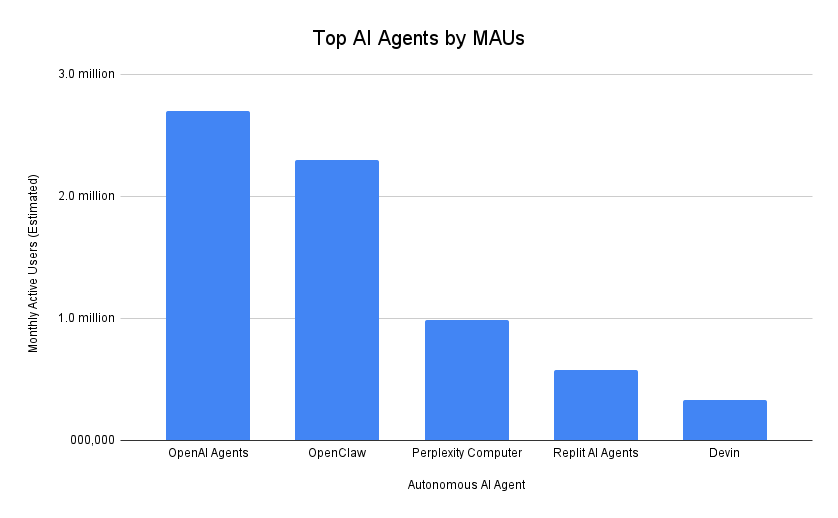

In this section, we list the top autonomous AI agents by number of active users as of Q1 2026. Monthly active users (MAUs) is the strongest indicator of user engagement and adoption, and MAU growth over time reveals whether a platform is gaining traction in the market or losing momentum.

To create this list, we compiled user data from each of the most popular autonomous AI agents, using founder interviews, first-party published claims, and third-party research.

| Rank | Autonomous AI Agent | Top Use Cases | Model Families Used | Monthly Active Users (Estimated) | Quarterly Growth |

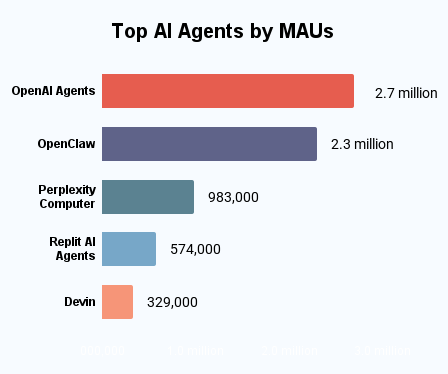

| 1 | OpenAI Agents (ChatGPT + API agents) | Research and synthesis, file workflows, customer support automation, content and SEO production, internal copilots | GPT | 2.7 million | +13% |

| 2 | OpenClaw | Lead generation and outreach, personal ops automation, cross-tool workflows, autonomous research agents, growth hacking and scraping | Model-agnostic with support for GPT, Claude, Gemini, Deepseek, and open-source models | 2.3 million | +9% |

| 3 | Perplexity Computer | Deep research, market and competitor analysis, quick decision support, learning and education, news monitoring | Multi-model stack with GPT, Claude, PPLX, and open-source models | 983,000 | +11% |

| 4 | Replit AI Agents | Build full apps from prompts, debugging and fixing code, automating scripts, deploying software, iterating on MVPs | Multi-model stack with GPT, Claude, Replit, and open-source models | 574,000 | +8% |

| 5 | Devin | End-to-end feature development, large refactors, bug investigation, engineering task delegation, documentation generation | Proprietary model stack tuned for software engineering | 329,000 | +10% |

| 6 | n8n AI Agents | Automated workflows, AI-powered pilelines, CRM and sales automation, data syncing, internal tooling | Model-agnostic with support for GPT, Claude, Gemini, and open-source models | 145,000 | +11% |

| 7 | Zapier AI + Agents | Business process automation, AI-enhanced triggers, lead management, content pipelines, notifications and orchestration | Model-agnostic with support for GPT, Claude, and other business automation-focused models | 78,000 | +9% |

| 8 | AgentGPT | Simple autonomous tasks, idea exploration, basic task chains, learning agent behavior, light automation | GPT | 41,000 | +7% |

| 9 | Superhuman | Inbox triage, auto-drafting replies, scheduling, follow-ups, summarization | Multi-model stack with GPT, Claude, and proprietary internal layers | 38,000 | +11% |
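Quarterly growth percentages can hide large differences in absolute user gains. The sketch below backs out an implied prior-quarter MAU figure for the top three agents in the table. It assumes that "Quarterly Growth" means current MAUs divided by prior-quarter MAUs, minus one; the study does not state the formula, and the function names are illustrative only.

```python
# Estimated MAUs and quarterly growth, copied from the table above.
agents = {
    "OpenAI Agents": (2_700_000, 0.13),
    "OpenClaw": (2_300_000, 0.09),
    "Perplexity Computer": (983_000, 0.11),
}


def implied_prior_mau(current_mau: float, quarterly_growth: float) -> float:
    """Back out last quarter's MAUs, assuming growth = current / prior - 1."""
    return current_mau / (1 + quarterly_growth)


def net_new_users(current_mau: float, quarterly_growth: float) -> float:
    """Implied absolute MAU gain over the quarter (same assumption as above)."""
    return current_mau - implied_prior_mau(current_mau, quarterly_growth)


for name, (mau, growth) in agents.items():
    print(f"{name}: ~{net_new_users(mau, growth):,.0f} implied net new MAUs")
```

Under this assumption, a platform with a similar growth percentage but a smaller base adds far fewer users in absolute terms, which is worth keeping in mind when comparing the growth column across ranks.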

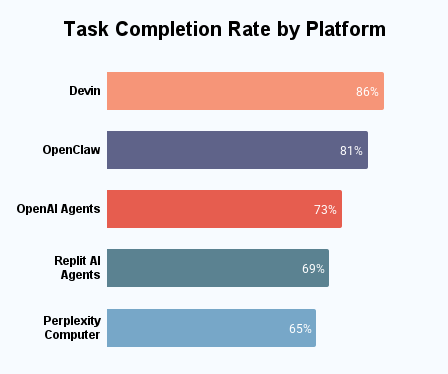

Task Performance and Completion Rates

A core focus of this study was evaluating agentic system performance on complex, multi-step tasks. Five types of tasks were assigned to 487 users, including itinerary planning, multi-vendor purchasing, financial budgeting, and comparative analysis.

| Platform | Task Completion Rate |
| --- | --- |
| Devin | 86% |
| OpenClaw | 81% |
| OpenAI Agents | 73% |
| Replit AI Agents | 69% |
| Perplexity Computer | 65% |

The mean completion rate across platforms was 75.3%. Devin led with 86% successful task completions without human intervention, followed by OpenClaw (81%) and OpenAI Agents (73%). Tasks such as single-vendor comparison and travel planning achieved the highest completion success (87%).
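As a quick check on the figures above, the following sketch computes the simple (unweighted) mean of the five completion rates in the table. It yields 74.8%, slightly below the reported 75.3%. The reported mean may therefore be weighted (for example, by the number of task attempts per platform) or may include platforms not shown in the table; that is an assumption, since the study does not describe its averaging method.

```python
from statistics import mean

# Task completion rates (%) copied from the table above.
completion_rates = {
    "Devin": 86,
    "OpenClaw": 81,
    "OpenAI Agents": 73,
    "Replit AI Agents": 69,
    "Perplexity Computer": 65,
}

# Simple average: every platform counts equally, regardless of sample size.
unweighted_mean = mean(list(completion_rates.values()))

# Platform with the highest completion rate.
leader = max(completion_rates, key=completion_rates.get)

print(f"Unweighted mean completion rate: {unweighted_mean:.1f}%")
print(f"Highest completion rate: {leader} ({completion_rates[leader]}%)")
```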

Tasks involving legal interpretations and niche SaaS comparisons showed the highest failure or partial-completion rates. Notably, only 18% of users felt the need to follow up on successful completions, which suggests high trust in agent responses.

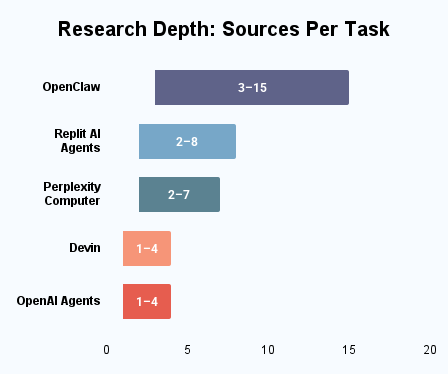

Research Depth: Sources Per Task

To assess whether autonomous AI agents truly provide academic-quality research support, users were asked to identify how many sources were cited by the platforms for each task. We also noted the minimum and maximum number of sources used by each AI agent across the entire experiment.

| Platform | Median Sources | Source Range | Notes |
| --- | --- | --- | --- |
| OpenClaw | 7 | 3–15 | Iteratively searches the web and other resources to fulfill complex objectives. |
| Replit AI Agents | 5 | 2–8 | Automates web tasks by navigating and extracting information from multiple web pages. |
| Perplexity Computer | 4 | 2–7 | Utilizes multimodal inputs, including visual and auditory data, to gather contextual information. |
| Devin | 2 | 1–4 | Primarily interacts with local applications and files; may access web sources if instructed. |
| OpenAI Agents | 2 | 1–4 | Processes user-uploaded files and data; may access additional sources if browsing is enabled. |

Our team’s main observation from this data was that the most-used AI agents tended to draw from the most sources. On average, however, today’s AI agents still fall short of the robust research capability of a human researcher.

Trust Gap between Agentic and Manual Search

Trust is a key dimension of user satisfaction when people use AI agents for search & discovery tasks. We asked users to rate their trust in manual results versus agentic results for the same tasks. The results were as follows:

| Trust Preference | Percentage of Users |
| --- | --- |
| Trusted Manual Results More | 54% |
| Trusted Agentic Results More | 34% |
| Trusted Both Equally | 13% |

Manual search results were trusted more by a wide margin (20 percentage points). Among users with technical backgrounds, the trust gap in favor of manual search widened to 37 percentage points due to AI hallucination and weak citations.
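The trust gap is a difference in percentage points, not a relative percentage. The minimal sketch below reproduces the 20-point figure from the table and also shows that the three shares sum to 101%, which is presumably a rounding artifact (an assumption; the study reports only rounded values).

```python
# Share of users (%) by trust preference, copied from the table above.
trust = {
    "manual": 54,
    "agentic": 34,
    "equal": 13,
}

# Gap in percentage points: a simple difference between the two shares.
gap_points = trust["manual"] - trust["agentic"]

# Relative comparison: how many times more often manual results were preferred.
ratio = trust["manual"] / trust["agentic"]

# The rounded shares do not have to sum to exactly 100.
total = sum(trust.values())

print(f"Trust gap: {gap_points} percentage points")
print(f"Manual preferred {ratio:.2f}x as often as agentic")
print(f"Sum of reported shares: {total}%")
```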

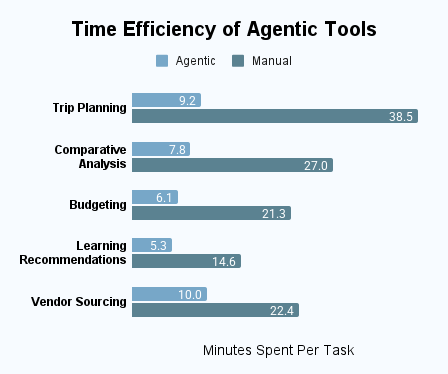

Time Efficiency of Agentic Tools

Time savings will be a key factor in the adoption of AI agents by both businesses and individuals. To gauge the current state of agentic tools, we asked users to complete a range of tasks both manually and with an AI agent, then compared the time spent.

| Task Type | Agentic Time | Manual Time | Time Saved (%) |
| --- | --- | --- | --- |
| Trip Planning | 9.2 minutes | 38.5 minutes | 76% |
| SaaS Comparative Analysis | 8.7 minutes | 27.0 minutes | 68% |
| Budget Optimization | 6.1 minutes | 21.3 minutes | 71% |
| Learning Recommendations | 5.3 minutes | 14.6 minutes | 64% |
| B2B Vendor Sourcing | 10.0 minutes | 22.4 minutes | 55% |

Across all five task types, using an AI agent saved an average of 66.8% of the time required to complete the task manually, highlighting one of the clearest benefits of agentic AI.
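The percentages in the table can be reproduced from the raw timings. The sketch below assumes time saved is computed as one minus the ratio of agentic time to manual time, rounded to the nearest whole percent, and that the overall figure is the simple average of those rounded values. The study does not spell out its formula, so the function name and rounding step are assumptions.

```python
# (agentic minutes, manual minutes) per task type, copied from the table above.
timings = {
    "Trip Planning": (9.2, 38.5),
    "SaaS Comparative Analysis": (8.7, 27.0),
    "Budget Optimization": (6.1, 21.3),
    "Learning Recommendations": (5.3, 14.6),
    "B2B Vendor Sourcing": (10.0, 22.4),
}


def time_saved_pct(agentic_minutes: float, manual_minutes: float) -> float:
    """Percentage of manual time saved by using the agent."""
    return (1 - agentic_minutes / manual_minutes) * 100


# Round each task's savings to a whole percent, as in the table.
saved = {
    task: round(time_saved_pct(agentic, manual))
    for task, (agentic, manual) in timings.items()
}

# Simple average of the rounded per-task percentages.
average_saved = sum(saved.values()) / len(saved)

for task, pct in saved.items():
    print(f"{task}: {pct}% saved")
print(f"Average across tasks: {average_saved:.1f}%")
```

Note that this is an average of per-task percentages, so each task type counts equally even though the tasks differ in length.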

Most-Refused Agentic Task Types

As much as we hope to rely on AI agents, they won’t do everything. High task refusal rates will pose a significant barrier to adoption of agentic AI tools and, conversely, will ensure an ongoing need for human involvement in industries such as law and medicine. Our study found that agentic platforms rejected approximately 8.9% of user requests outright. The most common reasons were ethical concerns, insufficient information, and speculative content. The table below lists the most commonly rejected types of user requests.

| Task Type | Refusal Rate | Refusal Reason |
| --- | --- | --- |
| Legal Counsel | 32% | Interpreting laws or offering personalized legal advice falls outside most AI agents’ regulatory boundaries, as doing so may constitute unauthorized practice of law. |
| Reverse Engineering | 21% | Reverse engineering AI algorithms, decompiling security or copyright-protected software, or analyzing proprietary firmware are all against most AI agents’ ethical and legal standards. |
| Financial Investment Guidance | 18% | Recommending specific stocks, constructing portfolios, or making personalized investment decisions is considered high-risk and typically restricted by AI agents to avoid violating financial regulations or offering unlicensed advice. |
| Speculative Predictions | 15% | Most AI agents discourage forecasting market trends, political outcomes, or future events, as it often leads to unreliable outputs and misrepresents the system’s capabilities. |
| Health Risk Assessments | 14% | Diagnosing conditions or offering personalized medical guidance is explicitly limited in most AI systems to comply with healthcare regulations like HIPAA or FDA guidance. |

Refusal rates varied across platforms, with Google Astra rejecting the highest percentage of queries tested at 11.4%, while Devin was the most permissive at 6.8%.

User Satisfaction by Task Type

We analyzed user satisfaction on a 1-10 scale (1 – very unsatisfied, 10 – very satisfied) for tasks in 6 categories in order to gauge how effectively AI agents completed tasks:

- Informational: Tasks wherein the AI agent is asked to provide defined information, such as simple definitional queries or explanations of topics that require little to no judgment

- Comparative: Tasks asking the AI agent to provide a comparison of two or more items

- Navigational: Tasks asking the AI agent to open another program or app and complete a subtask within that program or app

- Exploratory: Tasks that help with open-ended discovery or brainstorming

- Transactional: Tasks wherein the AI agent completes a purchase or another transaction

- Generative: Tasks wherein the AI agent creates documents, images, code segments, or other content

| Task Type | Example | Avg. Satisfaction (1–10) |
| --- | --- | --- |
| Informational | “What is quantum computing?” | 8.3 |
| Comparative | “Compare the iPhone 16 to the iPhone 16 Pro” | 7.8 |
| Navigational | “Open Spotify and play my Release Radar.” | 7.6 |
| Exploratory | “What are some fun activities to do between meetings on a business trip to DC?” | 7.1 |
| Transactional | “Book a flight from JFK to MIA on JetBlue next Tuesday morning.” | 6.3 |
| Generative | “Create a calculator that tells me the ROI a company would get from switching its CRM.” | 5.8 |

In our study, informational tasks scored highest, largely because the algorithms for basic information discovery have been worked out through mass generative AI chatbot usage since December 2022. Tasks requiring novel content generation and transactions scored lowest, owing to frequent errors and to agentic AI’s relative newness, which has left these systems with less training and personalization.
